Data Management Glossary

AI Compute

What is AI Compute?

AI compute refers to the computational resources required to run artificial intelligence workloads, including training machine learning models, processing data, and performing inference. These resources typically include GPUs, CPUs, specialized accelerators (e.g., TPUs), and cloud or on-prem infrastructure.

AI compute powers every stage of the AI lifecycle:

- Training: Processing massive datasets to build models

- Inference: Running models on new data to generate predictions

- Data processing: Preparing, filtering, and transforming data before use

AI compute environments can include:

- Cloud platforms (AWS, Azure, GCP)

- On-prem GPU clusters

- Edge computing systems

The amount of compute required for AI depends on:

- Data volume

- Model complexity (e.g., LLMs vs traditional ML)

- Frequency of inference and retraining
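One way to see how these three factors interact is a widely used rule of thumb for dense transformer models. Training costs roughly 6 × parameters × training tokens FLOPs, and inference costs roughly 2 × parameters FLOPs per generated token. The sketch below applies that approximation. The rule of thumb, the hypothetical GPU throughput, and the utilization figure are assumptions for illustration, not measured or vendor-supplied numbers.

```python
def training_flops(params: float, tokens: float) -> float:
    """Approximate training compute for a dense transformer: ~6 * N * D FLOPs."""
    return 6.0 * params * tokens


def inference_flops(params: float, tokens_generated: float) -> float:
    """Approximate inference compute: ~2 * N FLOPs per generated token."""
    return 2.0 * params * tokens_generated


def gpu_hours(flops: float, peak_flops_per_gpu: float, utilization: float) -> float:
    """Convert total FLOPs to GPU-hours, given an assumed sustained utilization."""
    return flops / (peak_flops_per_gpu * utilization) / 3600.0


if __name__ == "__main__":
    # Hypothetical example: a 7B-parameter model trained on 1 trillion tokens.
    train = training_flops(7e9, 1e12)
    # Hypothetical accelerator: 1e15 peak FLOP/s at 40% sustained utilization.
    print(f"Training FLOPs: {train:.2e}")
    print(f"Training GPU-hours: {gpu_hours(train, 1e15, 0.4):,.0f}")
```

Data volume enters through `tokens`, model complexity through `params`, and inference frequency through `tokens_generated`. Each factor scales total compute linearly under this approximation.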

Latest Trends in AI Compute

AI compute is rapidly evolving due to the rise of generative AI and large-scale data pipelines:

1. Exploding Demand for Compute

- Large language models (LLMs) require massive GPU clusters

- Enterprises are competing for limited GPU and high-performance infrastructure

2. Rising Costs

- AI compute is now one of the largest cost drivers in IT

- Costs are driven not just by compute but also by data movement, storage, and inefficiencies

3. Shift Toward Data Efficiency

Organizations are realizing that the biggest waste in AI compute is processing irrelevant or low-value data. This has led to:

- Data curation before training

- Filtering redundant, obsolete, and trivial (ROT) data

- Smarter data pipelines
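Here is a minimal sketch of ROT filtering. It assumes each file is described by a hypothetical `FileRecord` with a path, size, last-modified time, and precomputed content hash. The function drops exact duplicates (redundant), files not modified within a cutoff (obsolete), and files below a size threshold or with excluded extensions (trivial). The thresholds and extension list are illustrative assumptions. Real curation rules depend on the dataset and on retention policy.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from pathlib import PurePosixPath


@dataclass(frozen=True)
class FileRecord:
    path: str
    size_bytes: int
    modified: datetime
    content_hash: str  # e.g. a SHA-256 hex digest computed elsewhere


def filter_rot(
    records: list[FileRecord],
    now: datetime,
    max_age: timedelta = timedelta(days=3 * 365),  # illustrative cutoff
    min_size: int = 1024,  # illustrative "trivial" size threshold in bytes
    trivial_exts: frozenset[str] = frozenset({".tmp", ".bak"}),
) -> list[FileRecord]:
    """Return the records that are not redundant, obsolete, or trivial."""
    seen_hashes: set[str] = set()
    kept: list[FileRecord] = []
    for rec in records:
        # Redundant: identical content has already been kept under another path.
        if rec.content_hash in seen_hashes:
            continue
        # Obsolete: not modified within the allowed age window.
        if now - rec.modified > max_age:
            continue
        # Trivial: too small, or an extension treated as low value.
        ext = PurePosixPath(rec.path).suffix.lower()
        if rec.size_bytes < min_size or ext in trivial_exts:
            continue
        seen_hashes.add(rec.content_hash)
        kept.append(rec)
    return kept
```

The filter runs on metadata alone. It never opens the files, so it can be applied cheaply before any data is sent to a GPU.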

4. Hybrid AI Infrastructure

- AI workloads span on-prem + cloud + edge

- Data is distributed across storage systems, increasing complexity

5. Rise of Data-Centric AI

Focus is shifting from “bigger models” to “better data”.

This makes:

- Data discovery

- Metadata

- Data quality

…just as important as compute itself.

The Hidden Problem: AI Compute Waste

A major challenge with AI compute is inefficiency. Unstructured data is often:

- Duplicated

- Outdated

- Irrelevant

AI pipelines frequently process entire datasets instead of curated subsets. The result is:

- Wasted GPU cycles

- Higher cloud and infrastructure costs

- Lower model accuracy
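Processing cost grows roughly linearly with the volume of data processed. Under that assumption, the share of compute spent on low-value data is roughly the share of that data in the pipeline input. The arithmetic below makes this concrete. The dataset size, ROT fraction, and per-terabyte cost are hypothetical inputs, not measured figures.

```python
def wasted_compute_cost(
    total_tb: float, rot_fraction: float, cost_per_tb_processed: float
) -> tuple[float, float]:
    """Return (total processing cost, portion spent on ROT data).

    Assumes processing cost scales linearly with data volume.
    """
    if not 0.0 <= rot_fraction <= 1.0:
        raise ValueError("rot_fraction must be between 0 and 1")
    total_cost = total_tb * cost_per_tb_processed
    return total_cost, total_cost * rot_fraction


if __name__ == "__main__":
    # Hypothetical: 500 TB of pipeline input, 30% ROT, $40 of compute per TB.
    total, wasted = wasted_compute_cost(500, 0.30, 40.0)
    print(f"Total: ${total:,.0f}, spent on ROT data: ${wasted:,.0f}")
```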

How AI Compute Relates to Unstructured Data

Most enterprise data used in AI is unstructured:

- Documents

- Images

- Videos

- Emails

- Logs

AI success depends on finding, curating, and preparing the right data. Without proper unstructured data management:

- AI compute is spent on noisy, low-value data

- Data pipelines become inefficient and expensive

Learn more about the Komprise approach to AI data management.

How Komprise Optimizes AI Compute

Komprise helps organizations reduce AI compute waste and improve efficiency by ensuring only the right data is processed.

Key capabilities include:

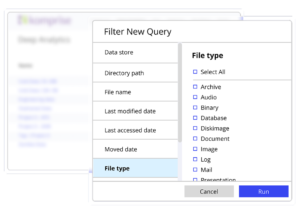

- Indexes metadata across all file and object unstructured data

- Provides a unified view across NAS, cloud, and object storage

- Enables precise data discovery and filtering
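A unified metadata view can be illustrated with a small in-memory index. It merges file metadata from several storage sources and answers filter queries without touching the underlying data. This is a hypothetical sketch, not the Komprise Global Metadatabase or its API. The source names, fields, and tags are assumptions.

```python
from collections.abc import Callable, Iterable
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Entry:
    source: str  # hypothetical source name, e.g. "nas-01" or "s3://archive"
    path: str
    size_bytes: int
    tags: frozenset[str] = frozenset()


@dataclass
class MetadataIndex:
    entries: list[Entry] = field(default_factory=list)

    def ingest(self, source: str, rows: Iterable[tuple[str, int, set[str]]]) -> None:
        """Add (path, size, tags) rows scanned from one storage source."""
        for path, size, tags in rows:
            self.entries.append(Entry(source, path, size, frozenset(tags)))

    def query(self, predicate: Callable[[Entry], bool]) -> list[Entry]:
        """Return matching entries across all sources; only metadata is read."""
        return [e for e in self.entries if predicate(e)]
```

Because NAS and object-store entries share one schema, a single query such as `index.query(lambda e: "imaging" in e.tags)` finds candidate files regardless of where they live.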

Data Discovery, Curation and Classification

- Identify high-value datasets for AI

- Filter out redundant, obsolete, and irrelevant data

- Improve dataset quality before compute is applied

- Automate data movement into AI pipelines

- Deliver only relevant data to compute environments

- Avoid unnecessary data transfer and processing
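Delivering only relevant data can be thought of as building a manifest of selected files before any transfer happens, so the pipeline copies just that subset. The sketch below uses a hypothetical `build_manifest` helper and a caller-supplied relevance test. It produces a JSON manifest and reports how many bytes were kept out of the pipeline. It is not a Komprise workflow definition.

```python
import json
from collections.abc import Callable


def build_manifest(
    files: dict[str, int], is_relevant: Callable[[str], bool]
) -> tuple[str, int, int]:
    """Select relevant files and return (manifest JSON, bytes sent, bytes avoided).

    `files` maps a path to its size in bytes, as read from a metadata index.
    """
    selected = {path: size for path, size in files.items() if is_relevant(path)}
    bytes_sent = sum(selected.values())
    bytes_avoided = sum(files.values()) - bytes_sent
    manifest = json.dumps({"files": sorted(selected)}, indent=2)
    return manifest, bytes_sent, bytes_avoided


if __name__ == "__main__":
    inventory = {
        "/nas/contracts/2024/a.pdf": 1_200_000,
        "/nas/contracts/2016/b.pdf": 900_000,
        "/nas/scratch/c.tmp": 5_000_000,
    }
    # Hypothetical relevance rule: only 2024 contracts go to the AI pipeline.
    manifest, sent, avoided = build_manifest(inventory, lambda p: "/contracts/2024/" in p)
    print(manifest)
    print(f"bytes sent: {sent:,}, bytes avoided: {avoided:,}")
```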

Serverless Compute for Data Preparation (KAPPA)

- Run metadata enrichment and data prep at scale

- Avoid building custom infrastructure

- Prepare data efficiently before AI workloads run
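Metadata enrichment is usually a function applied independently to each file, which makes it easy to fan out across many workers. The sketch below uses Python's standard `concurrent.futures` module to run a hypothetical `enrich` function over many paths in parallel. It illustrates the general pattern only and is not the KAPPA programming interface. The extension-to-type mapping is an assumption.

```python
from concurrent.futures import ThreadPoolExecutor
from pathlib import PurePosixPath

# Hypothetical mapping from file extension to a content-type tag.
TYPE_TAGS = {
    ".pdf": "document", ".docx": "document", ".jpg": "image", ".png": "image",
    ".mp4": "video", ".eml": "email", ".log": "log",
}


def enrich(path: str) -> dict[str, str]:
    """Derive simple tags for one file from its path alone (no content is read)."""
    p = PurePosixPath(path)
    return {
        "path": path,
        "name": p.name,
        "type": TYPE_TAGS.get(p.suffix.lower(), "other"),
    }


def enrich_all(paths: list[str], workers: int = 8) -> list[dict[str, str]]:
    """Apply `enrich` to every path in parallel; results keep the input order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(enrich, paths))
```

Threads suit I/O-bound enrichment, such as reading file headers or calling a remote classification service. For CPU-heavy steps, `ProcessPoolExecutor` offers the same `map` interface.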

Why This Matters

AI compute is expensive, and data inefficiency makes it far more costly than it needs to be. Komprise shifts the model from:

“Throw more compute at the problem”

to:

“Send only the right data to compute”

Why is AI compute so expensive?

Because of high demand for GPUs, large datasets, and inefficient data pipelines that process unnecessary data.

What impacts AI compute costs the most?

Data volume, data quality, and how efficiently data is curated before processing.

How can organizations reduce AI compute costs?

By filtering and preparing data before it enters AI pipelines, reducing the amount of data processed.

Read the Komprise Guide to AI Data Preparation.

How does Komprise help optimize AI compute?

Komprise identifies and delivers only relevant, high-value data to AI pipelines using its Global Metadatabase and analytics-driven unstructured data management workflows.

AI compute powers modern AI, but without proper data management, it becomes inefficient and expensive. Komprise ensures that AI compute is used effectively by curating, optimizing, and delivering the right unstructured data to AI pipelines, reducing cost and improving outcomes.

Unstructured data management solutions and unstructured data workflows are increasingly being used to improve storage efficiency and speed time to value for AI.


Read the Duquesne University case study to learn more about Komprise Smart Data Workflows and AWS Rekognition for rapid image search across petabytes of data.