Are you measuring the right things for unstructured data management and file storage?

This article has been adapted from its original version on VentureBeat.

The explosion of unstructured data and the diversity of data types today are bringing a host of new challenges for enterprise IT departments and data storage professionals. These include escalating file storage and backup costs, management complexity, security risks, and an opportunity gap caused by poor visibility into the data.

It’s not enough to shoot in the dark anymore. IT leaders need new, smart analytics and metrics that go beyond legacy storage indicators to help them understand their data, which in turn leads to cost savings and better compliance. These metrics should also track energy consumption so that teams can reduce it and meet broader sustainability goals, which are becoming critical in this age of cyclical energy shortages and climate change.

First, let’s review what storage metrics IT departments have traditionally tracked:

Legacy storage IT metrics

Over the last 20-plus years, IT professionals in charge of data storage tracked a few key metrics primarily related to hardware performance. These include:

- Latency, IOPS and network throughput

- Uptime and downtime per year

- RPO: Recovery point objective (time-based measurement of the maximum amount of data loss that is tolerable to an organization)

- RTO: Recovery time objective (time to restore services after downtime)

- Backup window: Average time to perform a backup

The new metrics: Data-centric versus storage-centric

In today’s world, where data is the center of decisions, there are a host of new data-centric measures to understand and report beyond traditional IT infrastructure metrics. IT leaders need insights to inform cloud data management strategies. Departments and business unit leaders are increasingly responsible for monitoring their own data usage, and often for paying for it. Discussions between IT and the business can become contentious: IT is trying to conserve spend and free up capacity, while business leaders are uneasy about archiving or deleting their own data. These metrics, which are standard in Komprise, help bridge the gap:

Storage costs for chargeback or showback: Even if a department doesn’t participate in a chargeback model, stakeholders should understand costs and be able to drill down into metrics. They can identify areas where tiering to cold data storage can be applied to reduce spend.
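
As a rough illustration of a showback calculation, the sketch below totals monthly storage cost per department from usage figures. The tier names, the per-terabyte rates, and the `showback` function are all hypothetical and chosen only for this example; real rates depend on your vendors and contracts, and this is not how Komprise computes its reports.

```python
from collections import defaultdict

# Hypothetical monthly rates in dollars per terabyte (assumptions for illustration).
RATE_PER_TB_MONTH = {
    "tier1_nas": 250.0,
    "cloud_object": 25.0,
}


def showback(usage_rows):
    """Return {department: monthly_cost} from (department, tier, terabytes) rows."""
    costs = defaultdict(float)
    for department, tier, terabytes in usage_rows:
        costs[department] += terabytes * RATE_PER_TB_MONTH[tier]
    return dict(costs)


if __name__ == "__main__":
    usage = [
        ("research", "tier1_nas", 10),
        ("research", "cloud_object", 40),
        ("finance", "tier1_nas", 2),
    ]
    for department, cost in showback(usage).items():
        print(f"{department}: ${cost:,.2f} per month")
```

Breaking the cost out by tier in this way makes it easier to see how much a department would save by moving a given volume of data from the more expensive tier to the cheaper one.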

Data growth rates: Overall trending information keeps IT and business heads on the same page so they can collaborate on new ways to manage explosive data volumes. Stakeholders can drill down into which groups and projects are growing data the fastest and ensure that data creation/storage is appropriate according to its overall business priority.
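
A growth-rate ranking can be sketched in a few lines. The example assumes you already have periodic capacity snapshots per group (in terabytes); the `growth_rates` function and the group names are hypothetical.

```python
def growth_rates(snapshots):
    """Rank groups by percent growth between their first and last snapshot.

    snapshots maps a group name to a list of sizes in TB, oldest first.
    Groups with fewer than two snapshots, or a non-positive starting size,
    are skipped because a percentage is not meaningful for them.
    """
    rates = {}
    for group, sizes in snapshots.items():
        if len(sizes) < 2 or sizes[0] <= 0:
            continue
        rates[group] = (sizes[-1] - sizes[0]) / sizes[0] * 100
    # Fastest-growing groups first.
    return sorted(rates.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    history = {"genomics": [100.0, 120.0, 150.0], "finance": [40.0, 50.0]}
    for group, pct in growth_rates(history):
        print(f"{group}: {pct:.1f}% growth")
```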

Age of data and access patterns: Most organizations have a large percentage of “cold data” that hasn’t been accessed in a year or more. Metrics showing the percentage of cold versus warm versus hot data are critical to ensure that data is living in the right place at the right time according to its business value and to meet savings goals.
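
To make the hot/warm/cold split concrete, here is a minimal sketch that buckets files by last access time and reports each bucket as a share of total bytes. The one-year cold threshold follows the definition above; the 30-day hot threshold and all function names are assumptions for illustration. Note that last-access timestamps can be unreliable on file systems mounted with options such as `noatime`.

```python
import os
import time

SECONDS_PER_DAY = 86400


def temperature(age_days, hot_days=30, cold_days=365):
    """Classify data by days since last access (thresholds are assumptions)."""
    if age_days >= cold_days:
        return "cold"
    if age_days >= hot_days:
        return "warm"
    return "hot"


def temperature_breakdown(files, now=None):
    """Return {temperature: percent of total bytes}.

    files is an iterable of (size_bytes, last_access_epoch_seconds).
    """
    now = time.time() if now is None else now
    totals = {"hot": 0, "warm": 0, "cold": 0}
    for size, atime in files:
        age_days = (now - atime) / SECONDS_PER_DAY
        totals[temperature(age_days)] += size
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {name: 0.0 for name in totals}
    return {name: 100 * size / grand_total for name, size in totals.items()}


def scan(root):
    """Yield (size, last access time) for every readable file under root."""
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            try:
                info = os.stat(os.path.join(dirpath, name))
            except OSError:
                continue  # skip files that vanish or cannot be read
            yield info.st_size, info.st_atime


if __name__ == "__main__":
    print(temperature_breakdown(scan(".")))
```

Weighting by bytes rather than by file count keeps the result aligned with capacity and cost, since a handful of large cold files can dominate the footprint.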

Top data owners/users: This can show trends in usage and indicate any policy violations, such as individual users storing excessive video files or PII files being stored in the wrong directory. Surveys show that compliance and data governance are becoming a top priority in data management.

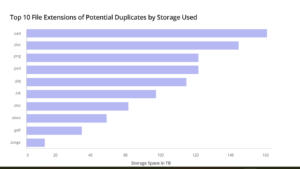

Common file types: A research team collecting data from certain applications or instruments may not know how much they have or where it’s all stored. The ability to see data by file extension can inform future research initiatives. This could be as simple as finding all the log files, trace files or extracts from a given application or instrument and moving them to an analytics tool.
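
A view of data by file extension can be approximated with a simple tally. The sketch below assumes a list of (path, size) pairs is already available, for instance from a directory scan or an index export; `bytes_by_extension` is a hypothetical helper.

```python
import os
from collections import Counter


def bytes_by_extension(paths_and_sizes):
    """Return [(extension, total_bytes), ...] sorted largest first.

    Extensions are lowercased so that .LOG and .log are counted together;
    files without an extension are grouped under "(none)".
    """
    totals = Counter()
    for path, size in paths_and_sizes:
        extension = os.path.splitext(path)[1].lower() or "(none)"
        totals[extension] += size
    return totals.most_common()


if __name__ == "__main__":
    sample = [
        ("/instrument/run1.LOG", 5),
        ("/instrument/run2.log", 7),
        ("/instrument/trace.trc", 3),
        ("/instrument/README", 1),
    ]
    for extension, total in bytes_by_extension(sample):
        print(extension, total)
```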

Getting this data requires a way to find and index data across vendor boundaries, including cloud providers, using a single pane of glass. Collating data from all your storage providers by hand to get these metrics is possible, but it is labor-intensive and error-prone. Independent data management solutions such as Komprise, with the help of our Global File Index, can help achieve these deeper and broader analytics goals.

New metrics for sustainable data management

Another core set of needed metrics for IT infrastructure teams relates to energy use, a growing mandate across all sectors. Managing data and IT responsibly is no small facet of sustainability programs.

A report by Schneider Electric projects that IT sector electricity demand will grow 50% by 2030.

Most organizations have hundreds of terabytes of data that could be deleted but is hidden or not understood well enough to manage appropriately. Storing rarely used and zombie data on top-performing Tier 1 storage (whether on-premises or in the cloud) is not only expensive but also consumes the most energy resources. The sustainability-related data management metrics below can help measure and reduce energy consumption as it relates to data storage. Komprise delivers all of these metrics today!

Last access time and creation time: Data access and age metrics can inform decisions about moving data to a lower-carbon storage location such as cloud object storage.

Duplicate data reduced: Deleting data that is not needed naturally lowers the storage footprint and energy usage. Often, especially in research organizations, datasets are replicated for different experiments and tests but never deleted.
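
One common way to find exact duplicates is to group files by size and then compare content hashes within each group. The sketch below illustrates that general technique with Python's standard library; it is not a description of how Komprise identifies duplicates, and the function names are hypothetical.

```python
import hashlib
import os
from collections import defaultdict


def file_digest(path, chunk_size=1 << 20):
    """Return the SHA-256 hex digest of a file, read in chunks to limit memory use."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def find_duplicates(paths):
    """Return lists of paths whose contents are byte-for-byte identical."""
    by_size = defaultdict(list)
    for path in paths:
        by_size[os.path.getsize(path)].append(path)

    by_content = defaultdict(list)
    for size, group in by_size.items():
        if len(group) < 2:
            continue  # a unique size cannot have a duplicate, so skip hashing
        for path in group:
            by_content[(size, file_digest(path))].append(path)

    return [sorted(group) for group in by_content.values() if len(group) > 1]


def reclaimable_bytes(duplicate_groups):
    """Bytes freed if one copy from each duplicate group is kept."""
    return sum(os.path.getsize(group[0]) * (len(group) - 1) for group in duplicate_groups)
```

Tracking `reclaimable_bytes` over time gives a simple "duplicate data reduced" figure. Whether a duplicate is actually safe to delete remains a decision for the data owner.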

Data stored by vendor: Legacy storage technology (RAID, SAN, tape) is generally more wasteful, which is one reason SSD and all-flash storage have been growing quickly. Newer storage technologies are much more efficient than spinning disks, which reduces power consumption. Understanding the percentage of data stored on legacy solutions is a starting point toward defining how and when to upgrade to more modern technology, including cloud storage.

Why New Metrics Matter

Expanding a metrics program requires time, resources and money. But doing so can inform cost-effective and sustainable unstructured data management decisions, potentially cutting spending and energy usage by 50% or more. Furthermore, data consumers gain detailed insights into their data and spend less time searching for it. An estimated 80% of the time spent on AI and data mining projects goes to finding the right data and moving it to the right place.

Want to learn more about metrics you can see with Komprise? Check out this post on Komprise Analysis.

Want to learn what’s new with Komprise unstructured data reporting? Check out this post.

Randy Hopkins is VP of global systems engineering and enablement at Komprise.